A CEO & CISO's Joint Strategy for Managing Tension Between Innovation and Security

Rayson Technologies CISO Andrew Wylie and CEO Jason Pugh explain how to manage AI risk with a hybrid approach that pairs human intuition with intelligent, innovative technology.

As enterprises race to deploy AI, innovation has outpaced security, creating a fundamental tension between the business imperative to innovate and the need to stay secure. With old security playbooks falling short, a new, hybrid path forward is emerging, one that pairs human oversight with intelligent technology.

That's the approach championed by Andrew Wylie, CISO at Rayson Technologies. Wylie, a senior IT and cybersecurity professional with over 25 years of experience, and Rayson Technologies CEO Jason Pugh advocate a two-pronged first line of defense that augments human intuition with tools that operate at machine speed.

"Human intuition is an amazing thing. That gut feeling that something isn't right," Wylie says. "But we aren't fast enough on our own. That's why we must pair that irreplaceable human insight with tools that can monitor, check, and alert us at machine speed." Traditional governance is ill-equipped to address the novel threats posed by AI, he explains, yet purely technical solutions are incomplete on their own.

Growing pains: Pugh explains that the industry is facing a steep learning curve, similar to the early days of the internet. "If you look at information security ten or twenty years ago, some people thought, 'Just unplug the network cable, and that's good enough,'" he says. "We're going to have very similar learning pains to figure out security in this space because I don't think anybody really has the perfect answer."

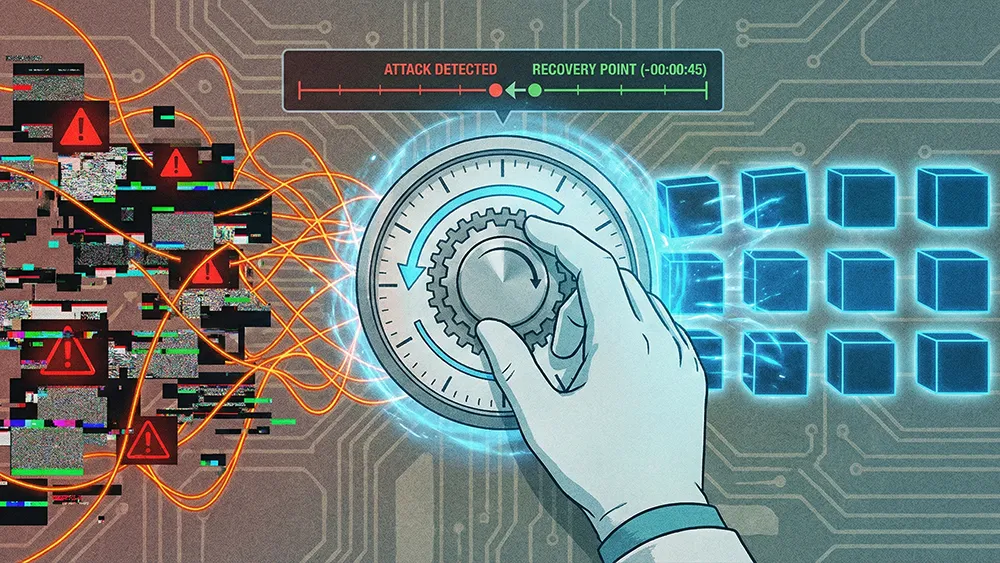

Haystack, meet needle: The scale and stakes of AI have changed the rules, leaving many executives without a clear disaster recovery playbook, Wylie explains. The sheer size of AI models makes comprehensive data vetting nearly impossible. "The larger ones have hundreds of billions of parameters. So how can you vet all the data going into that model? It's the whole needle-in-the-haystack problem," he says.

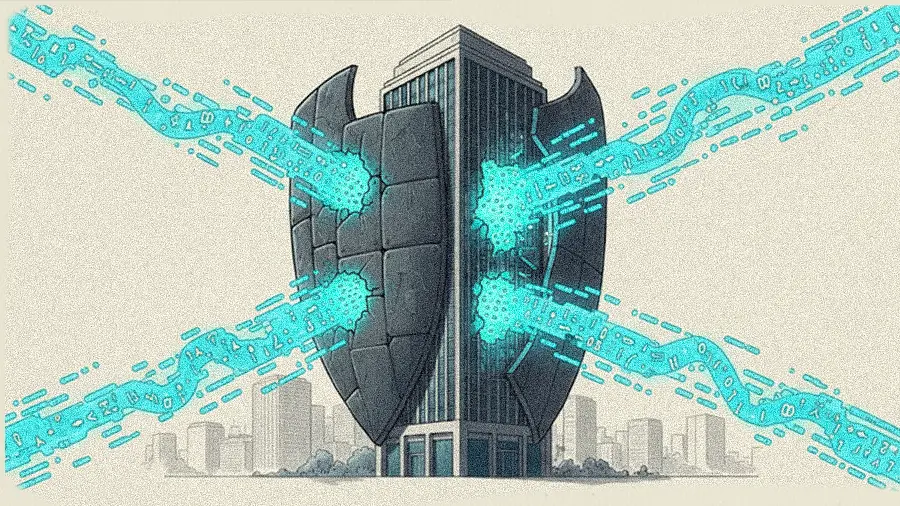

Meanwhile, the risks are amplified in specialized fields, where even minor compromises can have severe real-world consequences. "What happens if that highly specialized model is compromised, even just the smallest amount? That could have huge implications," Wylie cautions. Even emerging technical solutions can be flawed, Pugh continues. Some teams use one model to validate another, but that approach has a critical vulnerability. "If you're injecting things, that's the problem. You could be refining it with bad data and not even know it," he says.

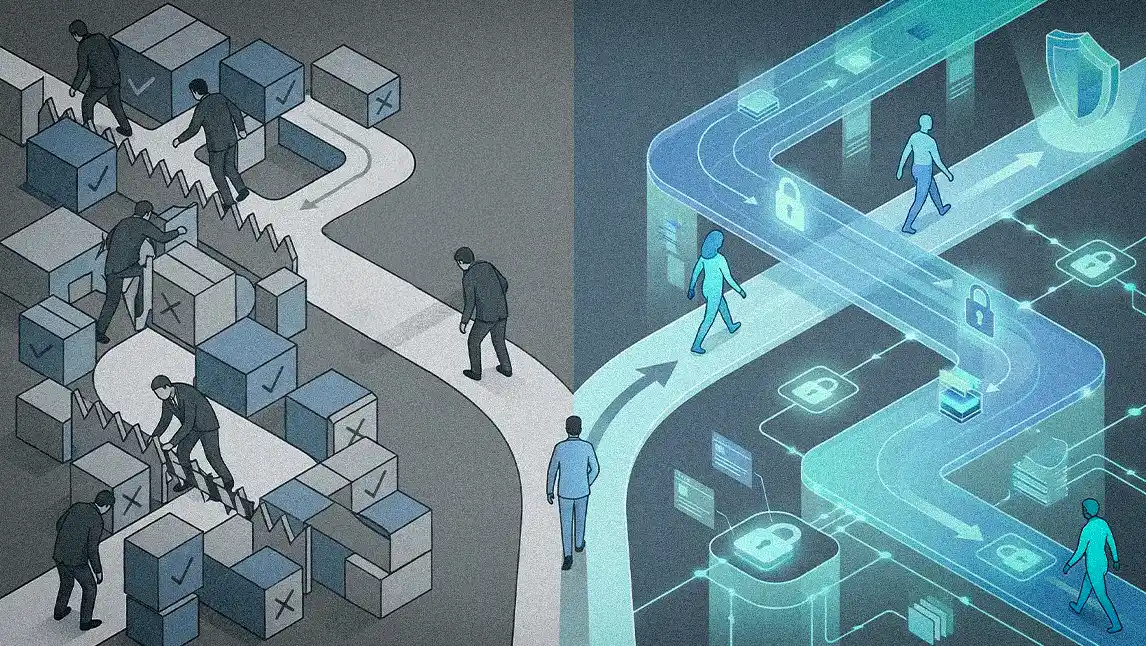

According to Wylie, the most urgent priority is a holistic, step-by-step strategy that begins with leadership alignment on risk and a culture of open communication. Throughout his career, he's seen a broad spectrum of responses to AI, from organizations banning it across the company to those mandating AI agents for every consultant.

Start with the C-Suite: The key is to start small and build a foundation of policy and awareness, Wylie says. "The first step is to take that risk-based approach with the senior leadership in the organization. Try to understand, from an organizational standpoint, what the risk tolerance is. And then start to put together policy and procedures around that. Focus on a small toolset to start. Just dip a toe in the water."

Foster the culture: "Try to encourage collaboration from a security perspective," Wylie continues. "Say, 'Hey, guys, this is new. We know you'll have a lot of questions. Don't be fearful.' Just like you're trying to teach phishing security awareness, get them to keep and maintain that open dialogue."

Permissions and parallels: Managing AI doesn't require reinventing the wheel, Pugh adds. Instead, it involves applying familiar concepts. "You don't allow everybody on the internet to just go access your OneDrive, for example. You have those restrictions. So we're talking about a different mindset on this, but it's a similar approach."

Ultimately, the conflict between "go faster" and "be secure" isn't a problem to be solved, but a productive tension to be managed, the two conclude. A hybrid approach relies on constant, open communication and a shared understanding that the goal is to build resilience and navigate risk, not to eliminate it. Or, as Pugh puts it, absolute security is an illusion. "If a human made it, a human can break it. So when we talk about security, there's always a backdoor. It's our job to mitigate those backdoors while still being innovative."