Why AI Governance Must Stop Being The Police And Start Enabling Innovation

Xin Tu, a Risk Executive in AI, Data, IT, and Cyber, Financial Services, explains why governance teams that position themselves as blockers get routed around, and how redesigning frameworks for speed and risk classification turns governance into the accelerant enterprises need.

Governance teams in financial services are operating in two modes simultaneously: responding to live regulatory demands while trying to build the proactive frameworks that agentic AI requires. The result is what one risk executive describes as putting the wheels on while the plane is trying to take off. And the teams that still show up as the compliance police are finding that business units simply work around them.

Xin Tu is a Risk Executive in AI, Data, IT, and Cyber, Financial Services, with experience leading data governance and IT audit at major financial institutions. She also serves on the Global Editorial Board of CDO Magazine, sits on AI and CDO advisory boards, and participates in CDMC and AI governance working groups at the EDM Council. Her career spans audit, risk oversight, and first-line governance across banking, broker-dealer, and payment network environments.

"We're trying to put structure and maturity in place while the plane is already taking off. That's the reality of AI and data governance right now," says Tu. The pressure comes from two directions at once. Enterprises face urgency to deploy agentic AI capabilities as products, while employees simultaneously adopt AI tools to automate their own daily work. Both create risk. And both move faster than annual or semiannual compliance cycles can govern. Tu says the stakeholders closest to these use cases are increasingly aware of the heightened risk, but that awareness has not yet spread across the broader enterprise, making AI and data fluency programs even more critical.

- Stop saying no: The biggest barrier to effective governance is perception. When teams see governance as the function that shuts things down, they stop engaging with it. "The governance function cannot be an obstacle anymore. It has to be part of the solution," Tu says. "Everyone wants to innovate. If you are not enabling that, you'll get left behind because teams are experimenting whether you know about it or not. They just don't want to tell you."

- Show up differently: Repositioning requires a change in both messaging and behavior. "You have to say, I am not here to tell you no. I'm here to work with you along that journey to make sure we can make this happen in a responsible way," she explains. "You have to be able to elaborate the risk, explain the impact of choosing a different path, and offer a responsible alternative. It's not about shutting things down. It's about finding the path that works."

The human-in-the-loop problem compounds the challenge. Tu observes that in high-volume environments, human oversight quickly degrades into rubber-stamping. After a few months without catching errors, reviewers grow complacent and stop scrutinizing outputs. Her solution is risk-based triage.

- Classify and automate: "You may not need to check every single output for the low-risk use cases. Use a sampled approach," Tu recommends. "But for the high-risk cases, you have to establish a mechanism. Automate the common checks you already know to look for, and then spot-check the ones you haven't anticipated." The goal is directing limited human attention where it matters most, rather than spreading it thin across every output.

- Govern like a manager: For probabilistic, nondeterministic systems, Tu draws a direct parallel to managing human employees. "Just like humans, we're told to follow policy and procedures, but we can still deviate. So you teach the machine the same way. Feed it your code of conduct. Tell it what it can and cannot do and the consequences of not following," she says. Major banks are already assigning human managers to oversee agent workflows and behavior, treating AI systems with the same supervisory rigor applied to employees.
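
As a rough illustration of the risk-based triage Tu describes, the routing logic might look like the Python sketch below. It is a minimal sketch under stated assumptions, not her team's implementation: the two-tier classification, the sampling rates, the `route` function, and the `contains_account_number` check are all hypothetical names and values chosen for the example.

```python
import random
from dataclasses import dataclass
from typing import Callable, List, Optional

# Hypothetical rates; the article does not give any numbers.
LOW_RISK_SAMPLE_RATE = 0.05       # share of low-risk outputs sent to a human
HIGH_RISK_SPOT_CHECK_RATE = 0.25  # share of clean high-risk outputs spot-checked


@dataclass
class Output:
    use_case: str
    risk_tier: str  # "low" or "high" (assumed two-tier classification)
    text: str


# An automated check returns a reason string on failure, or None if it passes.
Check = Callable[[Output], Optional[str]]


def contains_account_number(output: Output) -> Optional[str]:
    """Illustrative 'known' check: flag any run of 10 or more digits."""
    run = 0
    for ch in output.text:
        run = run + 1 if ch.isdigit() else 0
        if run >= 10:
            return "possible account number in output"
    return None


def route(output: Output, checks: List[Check], rng: random.Random) -> str:
    """Return 'human_review' or 'auto_pass' for a single AI output."""
    if output.risk_tier == "low":
        # Low risk: sample a small share for review instead of checking all.
        if rng.random() < LOW_RISK_SAMPLE_RATE:
            return "human_review"
        return "auto_pass"

    # High risk: first run the automated checks for known failure modes.
    for check in checks:
        if check(output) is not None:
            return "human_review"

    # Then spot-check a share of the rest for issues nobody anticipated.
    if rng.random() < HIGH_RISK_SPOT_CHECK_RATE:
        return "human_review"
    return "auto_pass"


if __name__ == "__main__":
    rng = random.Random(0)
    flagged = Output("payments-agent", "high", "Transfer from 1234567890123")
    print(route(flagged, [contains_account_number], rng))
```

The design choice worth noting is that human reviewers only see outputs that failed a known check or were randomly sampled, which keeps review volume low enough that scrutiny is less likely to decay into rubber-stamping.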

Tu has seen firsthand that governance can accelerate innovation when designed correctly. In a previous role, her team redesigned an AI governance framework that streamlined documentation requirements, centralized intake and risk assessment into a single system, and reduced the friction that had been slowing teams down. The result was faster approvals without sacrificing risk coverage. But she emphasizes that the first iteration is never the final one. "Take the feedback, redesign. It's an iterative approach with a lot of stakeholders."

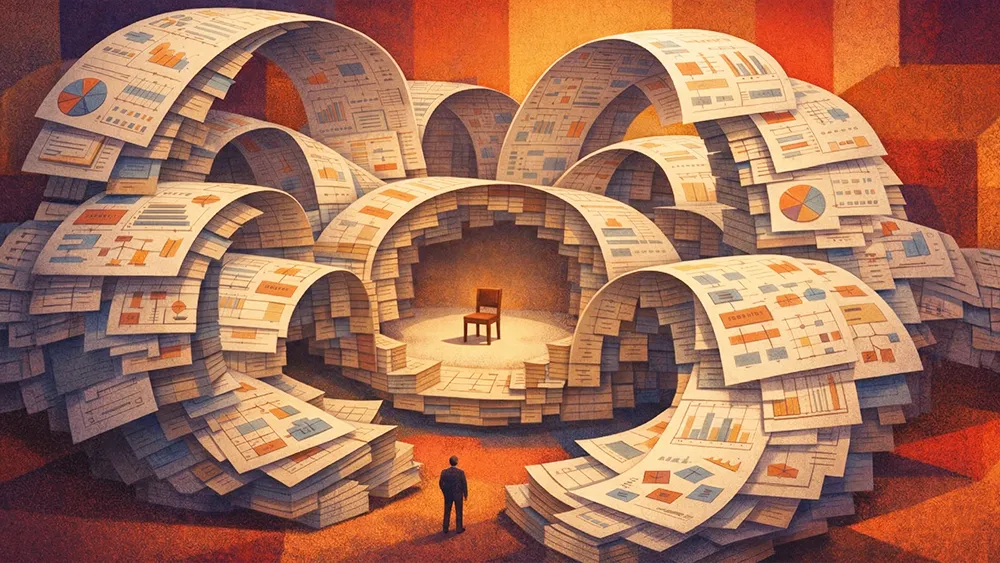

The deeper problem, Tu warns, is that enterprises are aggressively funding AI while neglecting the foundational data infrastructure it depends on. Decades of accumulated technical debt sit in what she calls "a black hole that no one wants to touch," and the hard, unglamorous work of cleaning up data governance receives far less investment than the next visible AI deployment.

"Everyone is chasing the shiny object," Tu says. "But the reality is, your AI algorithm can be running perfectly, and it still won't deliver outcomes if the fundamental data problems aren't fixed. It's the entire ecosystem that supports AI, and that's not anything sexy. No one wants to throw money at it. But there's no way around it. It's not a nice-to-have anymore. It's a fundamental necessity."