
Data Governance Fails When Companies Document Systems But Never Define Who Owns the Decision

Ivan Ruskevich, Principal Consultant at RaspberryTea.ai, explains why data governance only works when it is designed around ownership, accountability, and decision-making clarity rather than tooling, catalogs, or checkbox compliance.

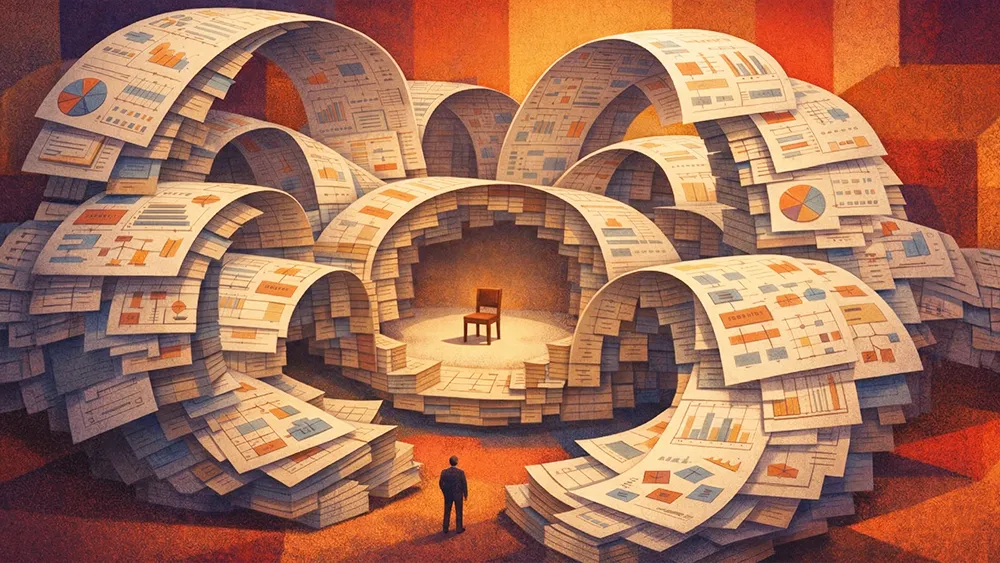

Most data governance programs are built around the wrong problem. Organizations invest in catalogs, lineage tools, and policy documents, then discover that none of it tells anyone what to do when something actually goes wrong. The documentation describes the system. It does not describe the behavior. When a model produces a bad output or a data source degrades, teams default to debating ownership rather than executing a response. The gap is not technical. It is structural.

Ivan Ruskevich, Principal Consultant at RaspberryTea.ai, advises fintech and mobile gaming companies on risk analytics, fraud prevention, and data-driven product strategy. His background includes portfolio management and end-to-end decision systems in fintech at DYNINNO, as well as mobile game monetization at Hypercell. That dual perspective, building production models and training the people who will inherit them, shapes a view of governance that starts with humans, not infrastructure.

"Data governance is not really about the data, and even less about the tools. It's about people: ownership, responsibility, and clarity around who makes decisions, what data those decisions depend on, and who is accountable when something goes wrong," says Ruskevich. His framework reduces governance to three questions that most organizations never answer. Who makes the decision? Which specific data does that decision depend on? And who is responsible if it goes wrong? Without clear answers, governance programs produce documentation that lives separately from the business process it is supposed to govern.
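As a rough illustration of how those three questions could be attached to a business process rather than filed in a separate document, the sketch below records one entry per decision and reports which questions are still unanswered. It is a minimal sketch, not Ruskevich's implementation: the `DecisionRecord` class, its field names, and the sample decisions and role names are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class DecisionRecord:
    """One business decision and the answers to the three questions (hypothetical schema)."""
    name: str
    decision_maker: Optional[str] = None                          # Who makes the decision?
    data_dependencies: List[str] = field(default_factory=list)    # Which data does it depend on?
    accountable_owner: Optional[str] = None                       # Who is responsible if it goes wrong?

    def unanswered(self) -> List[str]:
        """Return the names of the questions that have no answer yet."""
        gaps = []
        if not self.decision_maker:
            gaps.append("decision_maker")
        if not self.data_dependencies:
            gaps.append("data_dependencies")
        if not self.accountable_owner:
            gaps.append("accountable_owner")
        return gaps


# Illustrative records only; the decisions and roles are invented for this example.
records = [
    DecisionRecord(
        name="loan_approval",
        decision_maker="head_of_risk",
        data_dependencies=["credit_bureau_score"],
        accountable_owner="head_of_risk",
    ),
    DecisionRecord(name="fraud_block", decision_maker="fraud_lead"),
]

for record in records:
    print(record.name, record.unanswered())
```

A record with an empty `unanswered()` list has all three questions covered; anything else marks a decision whose ownership would otherwise be debated only after something breaks.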

Clarity over control: Ruskevich draws a distinction between governance as a control layer and governance as a clarity layer. "We introduce data governance policy not to control, but to understand what's actually happening," he says. "Understand our processes and clear out who's responsible, and what our action plan is in case something happens." The shift is practical. A control-oriented program generates checklists. A clarity-oriented program generates pre-designed action plans for every foreseeable failure mode, the way military planning accounts for scenarios that may never occur.
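One way to picture a pre-designed action plan is as a lookup from each foreseen failure mode to a named owner and an ordered list of steps, so that a missing plan shows up as an explicit gap instead of a debate. The sketch below assumes this shape for illustration; the `ActionPlan` type, the failure-mode names, the owners, and the steps are hypothetical and do not come from the article.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class ActionPlan:
    """A pre-agreed response: who acts, and in what order (hypothetical schema)."""
    owner: str
    steps: Tuple[str, ...]


# Illustrative plans only; real entries would come from the owners themselves.
PLANS: Dict[str, ActionPlan] = {
    "model_bad_output": ActionPlan(
        owner="risk_policy_owner",
        steps=("pause automated decisions", "route cases to manual review"),
    ),
    "data_source_degraded": ActionPlan(
        owner="backend_owner",
        steps=("switch to fallback source", "notify decision owners"),
    ),
}


def respond(failure_mode: str) -> ActionPlan:
    """Return the agreed plan, or fail loudly if nobody designed one."""
    try:
        return PLANS[failure_mode]
    except KeyError:
        raise LookupError(
            f"No pre-designed plan for {failure_mode!r}; this is a governance gap"
        ) from None


def uncovered(foreseen: List[str]) -> List[str]:
    """List the foreseen failure modes that still have no plan."""
    return [mode for mode in foreseen if mode not in PLANS]


print(respond("model_bad_output").owner)
print(uncovered(["model_bad_output", "vendor_outage"]))
```

Running `uncovered` against the list of scenarios a team can foresee turns the planning exercise into a concrete to-do list, including for scenarios that may never occur.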

Explainability by design: Ruskevich's fintech work provides the clearest proof point. In regulated lending, a central bank can demand an explanation for any individual model decision. That regulatory pressure forces a trade-off that many organizations outside of finance never confront. "We make our AI systems explainable by design. Sometimes we sacrifice some precision, but it should be efficient enough and still explainable," he says. The deciding factor is the cost of making a mistake. In a machine learning competition, a wrong prediction means nothing. In a credit decision or a health diagnosis, the cost is severe. That calculus, not the model's complexity, should determine how much explainability the system requires.

Distributed ownership: When it comes to who should own governance, Ruskevich argues against centralizing it under one function. "Not one person owns everything, but several people are responsible for their own segments," he says. Some own dashboards and reporting. Some own risk policy. Some own fraud prevention. IT owns the backend. The critical executive responsibility is making sure those owners work together rather than against each other. "One of the essential things is to prevent competition between these responsible persons. If competition is allowed, it will happen."

The checkbox trap: Governance also fails when it becomes a compliance exercise disconnected from business outcomes. Ruskevich frames this as a purpose problem. "In a risk department, the purpose is not risk. It's revenue," he says. The same logic applies to governance. If the primary goal is to make decisions clearer and more transparent, governance becomes useful. If the goal is filling out forms, it becomes the kind of performative exercise that teams brush aside until something breaks.

AI makes all of these weaknesses worse, faster. Ruskevich describes it as a catalyst: not a solution to governance problems, but an accelerant that exposes unowned decisions and process gaps at a speed organizations cannot ignore. "If we don't have clarity and we introduce AI, we just speed up the discovery that our processes are not owned by someone," he says. For organizations that have already done the foundational work of assigning ownership and designing action plans, AI amplifies capability. For those that haven't, it amplifies disorder.

Ruskevich is candid that his own views continue to evolve. "Before large language models appeared, I spent much time learning how to build AI. Now I also spend much time learning how to use AI," he says. "I advise everyone to be open to changes and not consider today's views as something final." For governance, the implication is the same: frameworks must be designed to adapt, because neither the decisions nor the tools are standing still.