
Is Cost Per Task Becoming The Only Metric That Matters?

Robert Alvarez, Senior AI Solutions Architect at Everpure, explains why DeepSeek v4 signals a shift from model performance races to infrastructure efficiency, and why 2026 is the year enterprises confront the fact that AI's real bottleneck has moved from the models to the systems underneath them.


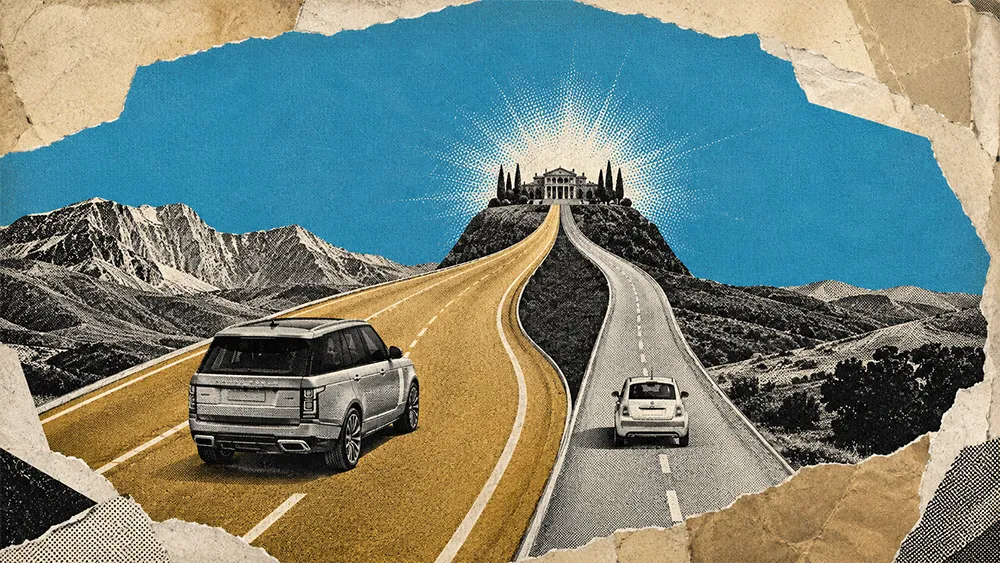

The performance gap between frontier and open-weight AI models is closing. The cost gap is not. When a self-hosted model produces comparable results at a fraction of the token price, paying premium API rates for marginal leaderboard gains stops making business sense. DeepSeek's latest release and the broader open-weight movement are forcing that calculation into the open, and enterprises paying attention are already restructuring how they route, cache, and serve AI workloads. With DeepSeek approaching a $50 billion valuation, the signal is clear: cost-efficient AI at scale has serious commercial backing.

Robert Alvarez is Senior AI Solutions Architect at Everpure, where he works on AI-ready data pipelines, KV caching infrastructure, and enterprise deployment architecture. Before Pure Storage, he spent four years as an AI Solutions Architect at NASA's Frontier Development Lab and held data science roles at Intel. He holds a PhD from Arizona State University.

"We're now getting to a plateauing of benchmarks where cost per task is really going to be the driver for switching. I'm curious to see how well not just DeepSeek, but all of the other open-source models start to compete against the frontier models in this space," says Alvarez.

The architecture split

Alvarez sees enterprise AI splitting into two tiers: premium frontier models handling orchestration and reasoning, and cheaper open-weight models running the repetitive execution work. Agentic workflows are where this plays out most clearly.

"Agentic workflows don't necessarily need a very large reasoning model. You need the reasoning model for the orchestration, but not for the task itself," Alvarez says. "Send the reasoning to the cloud API, pay a premium on the best frontier model, but then all the agentic workflows will be at a fraction of that cost because they'd be done by a different model that is equally good." If a comparable open-weight model runs at half the token cost, the only question is whether it can sustain focus on longer-running tasks the way frontier models currently do.
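The routing arithmetic behind this split is simple to sketch. The minimal Python example below is built on assumptions throughout. The model names and per-token prices are hypothetical placeholders, not real vendor pricing. The open-weight tier is set at half the frontier price to match the scenario above. Orchestration steps go to the frontier model, and execution steps go to the cheaper one.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Model:
    name: str
    usd_per_million_tokens: float  # hypothetical blended price, not real pricing

# Hypothetical tiers: the open-weight model runs at half the frontier token cost.
FRONTIER = Model("frontier-api", 10.0)
OPEN_WEIGHT = Model("open-weight-selfhosted", 5.0)

@dataclass(frozen=True)
class Step:
    kind: str    # "orchestrate" or "execute"
    tokens: int

def route(step: Step) -> Model:
    """Send orchestration (reasoning) to the frontier model, execution to the cheaper one."""
    return FRONTIER if step.kind == "orchestrate" else OPEN_WEIGHT

def cost_per_task(steps: list[Step], router=route) -> float:
    """Total USD cost of one agentic task under a given routing policy."""
    return sum(router(s).usd_per_million_tokens * s.tokens / 1_000_000 for s in steps)

# One made-up task: a planning step followed by four tool-execution steps.
task = [Step("orchestrate", 20_000)] + [Step("execute", 50_000)] * 4

all_frontier = cost_per_task(task, router=lambda s: FRONTIER)
split = cost_per_task(task)
print(f"all frontier: ${all_frontier:.2f}  split: ${split:.2f}")
# prints "all frontier: $2.20  split: $1.20"
```

In this invented task, most tokens are execution tokens, so routing them to the cheaper tier nearly halves the cost per task. A price table cannot answer the open question, though. It says nothing about whether the cheaper model stays on track through a long-running task.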

DeepSeek v4 introduces highly compressed attention that Alvarez says outperforms recent compression approaches from Google. "What this allows you to do is get faster time to first token. It allows you to have longer context windows and support higher batches because you don't need as much KV cache," he says. The practical result: "You can do more with the exact same infrastructure you currently have. You don't need to buy more." With GPU and NAND supply chains increasingly constrained, doing more with existing hardware is not a preference. It is a requirement.
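The link between KV cache size and serving capacity comes down to arithmetic. In a decoder-only transformer, each token stores one key vector and one value vector per layer and per KV head. A fixed memory budget therefore caps batch size multiplied by context length. The Python sketch below uses hypothetical model dimensions. It also uses a generic `compression` ratio as a stand-in for the real mechanism. It does not model DeepSeek v4's actual attention design.

```python
def kv_bytes_per_token(n_layers: int, n_kv_heads: int, head_dim: int,
                       bytes_per_elem: int = 2, compression: float = 1.0) -> float:
    """KV cache bytes per token: one K and one V vector per layer per KV head.

    `compression` is a generic ratio (4.0 means a 4x smaller cache), an assumption
    standing in for any specific model's attention or KV compression scheme.
    """
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem / compression

def max_sequences(kv_budget_gib: float, context_len: int, **dims) -> int:
    """How many sequences of `context_len` tokens fit in a fixed KV cache budget."""
    budget_bytes = kv_budget_gib * 1024**3
    per_sequence = kv_bytes_per_token(**dims) * context_len
    return int(budget_bytes // per_sequence)

# Hypothetical model: 32 layers, 8 KV heads of dimension 128, fp16 cache entries.
dims = dict(n_layers=32, n_kv_heads=8, head_dim=128)

baseline = max_sequences(40, 32_768, **dims)                            # 10
compressed = max_sequences(40, 32_768, compression=4.0, **dims)         # 40
compressed_long = max_sequences(40, 131_072, compression=4.0, **dims)   # 10
print(baseline, compressed, compressed_long)
```

With a cache four times smaller, the same 40 GiB budget holds four times as many 32K-token sequences. It can also hold the original ten sequences at four times the context length. In numbers, this is the "do more with the exact same infrastructure" point.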

KV caching as the infrastructure battleground

Alvarez describes KV caching as "a gift to storage" because it moves a core inference bottleneck from the compute layer into the data management layer, exactly where storage architecture can make a measurable difference.

Everpure's KVA product serves KV caches across an entire AI fleet rather than locking them to individual GPUs. "When you get a spike in usage and need to transfer a workflow elsewhere, you can also reroute that cache," Alvarez says. "Before, it was just stuck to that GPU." When the cache is also compressed, rerouting moves less data over the wire, and time to first token improves.
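A few lines of Python can show how a GPU-local cache differs from a fleet-wide one. Everything in this sketch is hypothetical. `SharedKVStore` and `GPUWorker` are illustrative stand-ins, not Everpure KVA's interface, and the KV "tensors" are placeholder bytes. The pattern shown is content-addressed reuse: the cache is keyed on the model and the prompt prefix, so any worker can read it after a reroute.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Optional

def prefix_key(model: str, prefix: str) -> str:
    """Content-addressed key: the same model and prompt prefix map to the same KV cache."""
    return hashlib.sha256(f"{model}\x00{prefix}".encode()).hexdigest()

@dataclass
class SharedKVStore:
    """Hypothetical fleet-wide KV tier that every GPU worker can read and write."""
    blobs: dict[str, bytes] = field(default_factory=dict)

    def put(self, key: str, kv: bytes) -> None:
        self.blobs[key] = kv

    def get(self, key: str) -> Optional[bytes]:
        return self.blobs.get(key)

@dataclass
class GPUWorker:
    """Hypothetical inference worker with a local cache backed by the shared store."""
    name: str
    store: SharedKVStore
    local: dict[str, bytes] = field(default_factory=dict)
    prefills: int = 0  # times this worker recomputed a prefix from scratch

    def _prefill(self, model: str, prefix: str) -> bytes:
        self.prefills += 1
        return f"KV[{model}:{len(prefix)} chars]".encode()  # placeholder, not real tensors

    def serve(self, model: str, prefix: str) -> bytes:
        key = prefix_key(model, prefix)
        kv = self.local.get(key)
        if kv is None:
            kv = self.store.get(key)  # another worker may already have computed it
        if kv is None:
            kv = self._prefill(model, prefix)
            self.store.put(key, kv)   # publish so the rest of the fleet can reuse it
        self.local[key] = kv
        return kv

store = SharedKVStore()
gpu_a, gpu_b = GPUWorker("gpu-a", store), GPUWorker("gpu-b", store)
prompt = "System: you are a claims-processing agent. Policy manual follows."

gpu_a.serve("open-weight-model", prompt)  # first request: prefill runs on gpu-a
gpu_b.serve("open-weight-model", prompt)  # usage spike: session rerouted to gpu-b
print(gpu_a.prefills, gpu_b.prefills)     # prints "1 0"
```

If each GPU kept only a local cache, `gpu_b` would have to repeat the prefill that `gpu_a` had already paid for.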

Alvarez argues that for most enterprise use cases, fine-tuning is no longer necessary. "With a really good prompt, unless you're working with highly specialized data like SEC filings, fine-tuning is not necessary," he says. The shift pushes evaluation toward enterprise-specific benchmarks. "I would wager that 99% of people who look at SWE-bench cannot name a single task that it does," he says. "Have you tested it on the things you care about?"
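A minimal version of "test it on the things you care about" is a harness with three parts. It runs each candidate model over your own cases, then reports the pass rate and the cost per successful task. In this Python sketch, the models are stubs with made-up answers and per-call prices. In practice, each callable would wrap a real API or self-hosted endpoint, and each `check` would encode your own acceptance criterion.

```python
from dataclasses import dataclass
from typing import Callable

# A model is any callable that takes a prompt and returns (output, usd_cost).
ModelFn = Callable[[str], tuple[str, float]]

@dataclass(frozen=True)
class Case:
    prompt: str
    check: Callable[[str], bool]  # your own pass/fail rule for this task

@dataclass(frozen=True)
class Result:
    pass_rate: float
    cost_per_success: float

def evaluate(model: ModelFn, cases: list[Case]) -> Result:
    """Run `model` on in-house cases and price each successful answer."""
    passed, spent = 0, 0.0
    for case in cases:
        output, cost = model(case.prompt)
        spent += cost
        if case.check(output):
            passed += 1
    cost_per_success = spent / passed if passed else float("inf")
    return Result(passed / len(cases), cost_per_success)

def stub(answers: dict[str, str], usd_per_call: float) -> ModelFn:
    """Hypothetical stand-in for a real model endpoint."""
    return lambda prompt: (answers.get(prompt, ""), usd_per_call)

cases = [
    Case("Total due: $1,250.00. What is the invoice total?", lambda out: "1,250.00" in out),
    Case("Ship to: Phoenix, AZ. What is the destination city?", lambda out: "Phoenix" in out),
]

frontier = stub({cases[0].prompt: "$1,250.00", cases[1].prompt: "Phoenix"}, usd_per_call=0.02)
open_weight = stub({cases[0].prompt: "$1,250.00", cases[1].prompt: "Tempe"}, usd_per_call=0.005)

for name, model in [("frontier", frontier), ("open-weight", open_weight)]:
    print(name, evaluate(model, cases))
```

Here the cheaper model still costs less per successful answer, but it fails half the cases. Which number matters depends on what a failure costs the business. A public leaderboard cannot answer that.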

2026 is the year the bottleneck moved

Alvarez frames 2026 as a turning point. For the first time, AI models got more expensive not because they improved dramatically but because the infrastructure to serve them became the constraint.

"There are no new data centers to support the growing capacity," Alvarez says. "If you think of raw data to generated output as an assembly line, we're now going to start doing the sub-optimizations." The focus shifts from model selection to end-to-end infrastructure design: how tokens move through the system, where caches live, how routing decisions get made, and how every layer from storage to compute contributes to throughput.

Whether DeepSeek v4 delivers on its full promise or falls short, the underlying pressure remains. Enterprises are being forced to measure AI productivity against budget, not benchmarks. "How much more productive are you at this given budget? That is a different constraint now," Alvarez says. The race for the best model is giving way to the race for the most efficient system. And that race runs through infrastructure.