
Why Financial Services Data Engineers Choose Orchestration Over Model Power

Ajinkya Chatufale, Lead Data Engineer at Barclays, on why enterprise AI reaches production only when teams orchestrate use cases, models, agents, protocols, and human review as one system.


The next enterprise AI bottleneck sits above the model layer. As teams move past pilots, the hard problem is coordinating models, agents, retrieval layers, tools, and human review into systems that hold up in production. That architectural work now decides whether AI becomes an operating capability or another stalled demo.

Ajinkya Chatufale is a Lead Data Engineer at Barclays, the British universal bank, and an applied AI and cloud data architect with more than a decade of experience across AWS, GCP, and Azure. His background spans data platforms, real-time pipelines, governance, and GenAI systems, which gives him a practical view of what breaks when AI leaves the sandbox and enters enterprise workflows.

"The real challenge isn't deploying AI. It's orchestrating models, data, and workflows so they actually work together in production," says Chatufale. For Chatufale, that orchestration starts earlier than most teams think. Before model selection, teams need to decide whether a problem actually needs AI at all, a discipline that sits behind stronger agentic AI foundations and the broader push to embed AI inside real workflows rather than treat it like a sidecar tool.

Start with the use case: Chatufale says AI creates the most immediate value by compressing early-stage work such as domain research and requirements gathering. In regulated industries, work that once took a week can become a much faster first pass. "AI doesn't replace engineers, it compresses timelines and amplifies how fast we can deliver." But he warns that speed only matters when teams separate deterministic tasks from problems that truly require generative or agentic systems, a line many failed enterprise AI rollouts still miss.

Give each model one job: His clearest example is a multi-agent code review pipeline. Legacy Python code in GitLab gets routed to separate agents for code review, performance analysis, and security checks. A reporting agent consolidates the findings, then a human reviewer decides whether a fix agent should change the code. "Multi-agent systems work best when each model has a clear role, not when everything shares the same 'brain.'" That approach mirrors the move from one assistant to a fleet of agents, and the growing view that teams should think of agents like team members with defined scope, handoffs, and accountability. It also lines up with newer multi-agent research systems that improve performance by distributing work in parallel.
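The shape of that pipeline can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Chatufale's implementation: the agent functions, the `Finding` type, and the string checks standing in for model calls are all hypothetical, and a real system would call a separate model or service in each agent.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Finding:
    agent: str
    message: str


# Each "agent" has exactly one narrow job. The string checks below are
# hypothetical stand-ins for what would be separate model calls.
def review_agent(source: str) -> List[Finding]:
    if "except:" in source:
        return [Finding("review", "bare except hides errors")]
    return []


def performance_agent(source: str) -> List[Finding]:
    if "range(len(" in source:
        return [Finding("performance", "index loop; consider direct iteration")]
    return []


def security_agent(source: str) -> List[Finding]:
    if "eval(" in source:
        return [Finding("security", "eval on untrusted input is unsafe")]
    return []


SPECIALISTS = [review_agent, performance_agent, security_agent]


def reporting_agent(source: str) -> List[Finding]:
    """Consolidate the findings of every specialist into one report."""
    report: List[Finding] = []
    for agent in SPECIALISTS:
        report.extend(agent(source))
    return report


def run_pipeline(
    source: str,
    approve: Callable[[List[Finding]], bool],
    fix_agent: Callable[[str, List[Finding]], str],
) -> str:
    """Run the specialists, then let a human decide whether to apply a fix."""
    report = reporting_agent(source)
    # The fix agent only runs when there are findings AND the human approves.
    if report and approve(report):
        return fix_agent(source, report)
    return source
```

The `approve` callback is where the human reviewer sits: the fix agent never touches the code unless that callback returns true, which keeps the decision to change code outside the models.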

That same logic extends into production operations. Chatufale points to agents that monitor pipelines, retrigger failed jobs, surface probable root cause, and notify developers or stakeholders before a missed SLA turns into a larger incident. The point is not autonomy for its own sake. It is faster response inside a controlled architecture.
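A bounded retry loop is one simple way to picture the retrigger-then-notify behavior described here. The sketch below is an assumption-laden illustration: `run_job`, `notify`, and `max_retries` are hypothetical names, and root-cause analysis is left out entirely.

```python
from typing import Callable


def monitor_job(
    run_job: Callable[[], bool],
    notify: Callable[[str], None],
    max_retries: int = 2,
) -> bool:
    """Run a job, retrigger it on failure, and escalate if retries run out.

    run_job returns True on success. notify is called once, only after
    the initial attempt plus max_retries retriggers have all failed.
    """
    attempts = 0
    while True:
        attempts += 1
        if run_job():
            return True
        if attempts > max_retries:
            # Escalate to a person instead of retrying forever.
            notify(f"job failed after {attempts} attempts")
            return False
```

Capping the retries is the "controlled architecture" part: the agent acts on its own within a fixed budget, then hands the problem to a developer or stakeholder.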

Ground the system before it scales: Chatufale uses retrieval-augmented generation to keep answers tied to enterprise data, and he sees the push toward stronger AI factory architectures as a response to the same production problem. He also points to Model Context Protocol and lessons from running MCP tools in practice as part of the next standardization layer around tool access and service integration. "Standardization, whether through protocols, models, or architecture, is what turns complex AI systems into something scalable and reliable."
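To make the grounding idea concrete, here is a toy sketch of the retrieval step in retrieval-augmented generation. It assumes a naive word-overlap score purely for illustration; production systems typically use embedding-based search, and the function names and prompt wording are hypothetical.

```python
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase and split on whitespace. Deliberately naive."""
    return set(text.lower().split())


def retrieve(question: str, documents: List[str], k: int = 2) -> List[str]:
    """Return the k documents sharing the most words with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question: str, documents: List[str], k: int = 2) -> str:
    """Build a prompt that restricts the model to the retrieved context."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents, k))
    return (
        "Answer using only the context below.\n"
        f"{context}\n"
        f"Question: {question}"
    )
```

The model only ever sees the retrieved passages, so its answer can be traced back to specific enterprise documents rather than to whatever it absorbed in training.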

Keep humans where judgment matters: "You can't rely entirely on AI because human judgment is still critical to catch hallucinations and guide outcomes." Chatufale keeps human review over generated reports, code fixes, and domain-specific outputs for exactly that reason. As orchestration becomes a core enterprise architecture layer, that judgment becomes more important, not less, especially as debates over accountability in AI workflows and emerging agent standards move closer to production reality.

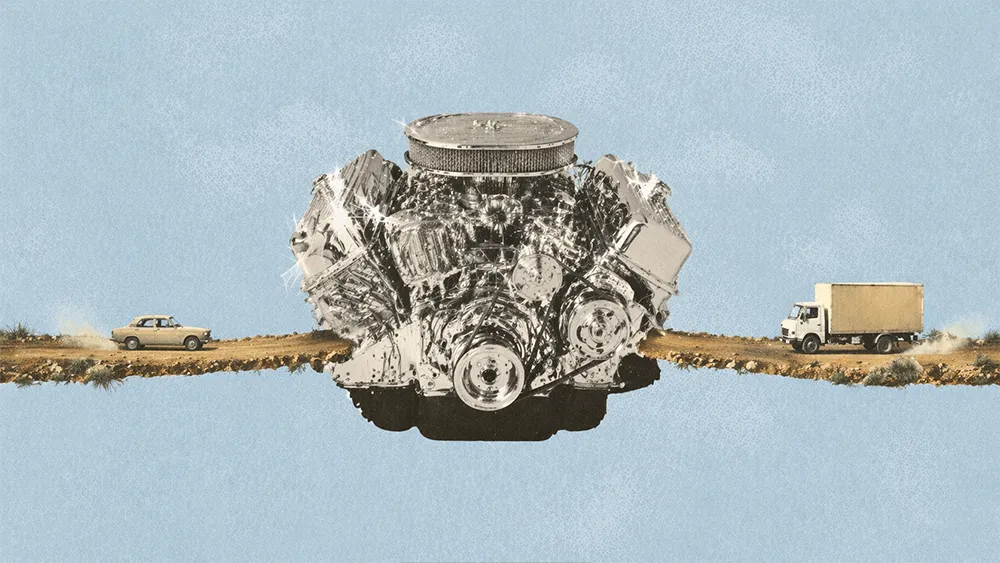

For Chatufale, the integrated platform versus point solution debate has no fixed answer. The right architecture depends on the use case, the sensitivity of the data, the cost profile of the workload, and the need for domain grounding. Heavyweight models belong on the hardest reasoning tasks. Smaller models belong on narrower jobs. Tools support the model, but they do not replace architectural judgment.
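One way to read that guidance is as a routing decision made before any model is called. The sketch below is illustrative only: the task names, the two task sets, and the `Route` tiers are assumptions, and it ignores the data-sensitivity and cost factors a real router would also weigh.

```python
from enum import Enum


class Route(Enum):
    DETERMINISTIC = "deterministic code"
    SMALL_MODEL = "small model"
    LARGE_MODEL = "large model"


# Hypothetical task catalogs. A real team would maintain its own.
DETERMINISTIC_TASKS = {"schema_validation", "date_parsing"}
HARD_REASONING_TASKS = {"root_cause_analysis", "requirements_synthesis"}


def choose_route(task_type: str) -> Route:
    """Pick the lightest option that can handle the task."""
    if task_type in DETERMINISTIC_TASKS:
        # No AI needed: plain code is cheaper and fully predictable.
        return Route.DETERMINISTIC
    if task_type in HARD_REASONING_TASKS:
        return Route.LARGE_MODEL
    # Everything else defaults to the smaller, cheaper model.
    return Route.SMALL_MODEL
```

Defaulting unknown tasks to the small model is a design choice made here for the example; a team handling sensitive data might prefer to reject unknown task types outright.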

That is why he sees enterprise AI maturing around modular, standardized systems rather than vendor absolutism. Teams that understand when to use agents, when to stay deterministic, and where to keep humans in the loop will move faster than teams forcing AI into every problem they see. In the end, modern AI success depends less on model power than on whether the system around that model is built to work.