AI Performance Standard Shifts Toward Contextual Training Data and Away From Generalism

Will Rowlands-Rees, Chief AI Officer at Lionbridge, says enterprise AI performance now hinges on context-rich training data, validation rigor, and deliberate bias control.

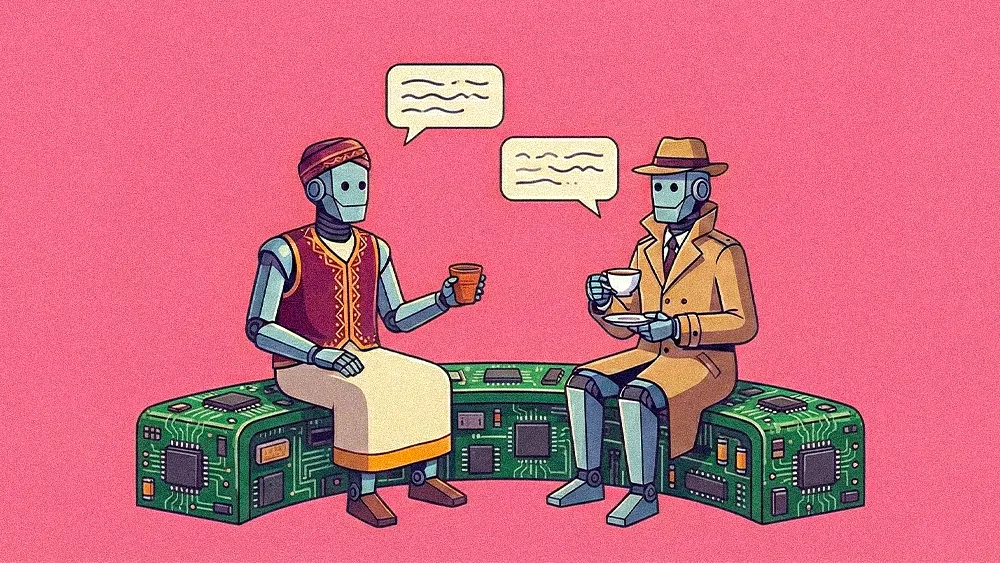

Enterprise AI’s advantage now lives in context. The companies pulling ahead are building data architectures that reflect how people actually speak across markets, switch between languages, and respond to cultural cues. Cultural intelligence starts in the training data and is reinforced through rigorous validation. When nuance is missing, models misfire. As foundation models become widely accessible, contextual depth is what turns AI from functional into truly differentiated.

Will Rowlands-Rees, Chief AI Officer at the translation and localization company Lionbridge, approaches the issue through a data-first lens shaped by years leading global AI and product organizations, including senior roles at Thomson Reuters. He believes that cultural AI succeeds or fails at the data layer. Enterprises that treat localization as a surface-level feature miss the deeper requirement: rethinking how training data is sourced, structured, and validated across markets.

He points to India as a clear illustration of the problem, where everyday speech fluidly blends Hindi and English into what’s commonly called 'Hinglish.' "It’s not enough to say you’ve trained a model on English or that you’ve trained it on Hindi. If your device needs to understand a conversation in India, it has to understand 'Hinglish,'" says Rowlands-Rees. "That’s the intersection where people are switching between the two languages as they speak, and that is what you need to make the product work." The broader lesson is that training data must reflect how people actually communicate in practice, not how languages are neatly categorized on paper.

Validation gap: He says companies often hold AI to a higher standard than humans, demanding near perfection before deployment, which slows progress and amplifies risk aversion. At the same time, poorly executed cultural nuance can create what he calls a “really negative experience,” making rigorous, in-market validation essential. “It’s not enough to ask your 55-year-old, seasoned marketeer in Japan if a product will work for the youth there. You have to get it validated and tested by people who are actually in that demographic. They are the ones who will tell you whether the experience resonates or not.”

Encoded assumptions: Operational discipline alone doesn’t solve the deeper issue of bias, he notes. He illustrates how bias becomes embedded in training data with a simple prompt experiment: ask an image model in English to generate a “successful entrepreneur” and it often produces a suited executive in a high-rise office. Give it the same prompt in Spanish and it may return a collaborative group in hoodies around a whiteboard. The divergence reflects the worldview encoded in the data, not neutral intelligence. “The most important thing about bias is knowing it’s likely to be there. Once you know that, you can decide whether to prompt through it, train through it, or simply accept it because it aligns with your point of view. The key is to understand the bias and then make a deliberate choice.”

Even with the right data strategy in place, Rowlands-Rees says the work stalls without enterprise-wide alignment. Cultural intelligence cannot live inside a CX roadmap or sit with a single executive sponsor. It has to be treated as a business priority that spans go-to-market, product, technology, and customer experience. Otherwise, initiatives fracture at the implementation stage and localized AI features ship without the rigor required to protect brand trust. “If you don’t have everyone on board and everyone aligned that it’s a problem worth solving, you’re only going to solve it badly. And if you solve it badly, it’s almost worse than not solving it at all.”

The race for relevance: The urgency is most visible in B2C environments, where shrinking attention spans redefine the cost of irrelevance. In markets measured in moments, generic experiences rarely survive first contact. “If you don’t have some form of personalized experience, there’s a good chance that you may not be in business in five years. The shift between this becoming a differentiator and a cost of business is probably already happening. So it’s not a choice as to whether to do it. It’s a choice as to how to do it.”

Rowlands-Rees closes where he began, returning to the central condition for success. Cultural intelligence demands executive conviction, operational discipline, and shared ownership across the business. Without that foundation, investment turns into exposure. “There needs to be an acceptance that this is a problem worth solving, and it’s not a CX problem. It is a business problem that everyone needs to be invested in solving. If you do that and then articulate the problem, you’ve got a fighting chance of being successful,” he concludes. “And if you don’t, you’re going to spend a lot of money and deliver a suboptimal experience that, when released, will probably be detrimental to your brand.”